The Penalized Trimmed Least Squares Method for Robust Variable Selection in Multiple Linear Regression

DOI:

https://doi.org/10.29304/jqcsm.2026.18.12433

Keywords:

Multiple Linear Regression, Outliers, Weighted Penalized Least Squares, Simulation

Abstract

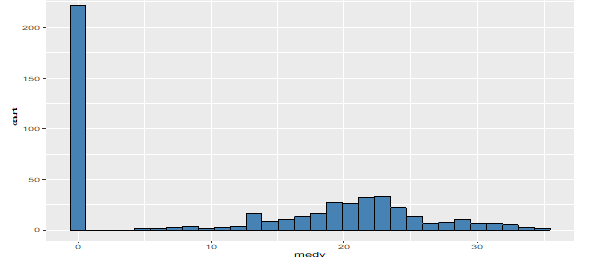

A large number of independent variables often degrades the accuracy of a multiple linear regression model. This has motivated researchers to select the best model using methods such as forward selection, backward elimination, and stepwise regression. However, these methods become time-consuming and ineffective with high-dimensional data, so the statistical literature has proposed the Lasso method and its variants to address these issues. These penalized methods are in turn sensitive to outliers, which has led to several robust studies, particularly on robustifying penalized methods for high-dimensional data in order to achieve effective variable selection and robust parameter estimation. Among these methods is the Trimmed Penalized Least Squares (RPLTS), which aims to make the Lasso approach more robust against outliers in the dependent variable or in the residuals. However, this method remains sensitive to leverage points. Accordingly, this research weights the RPLTS method so that it selects the best subset of variables while reducing the influence of leverage points and improving the model’s accuracy. Simulations and real data were used to evaluate the efficiency of the proposed method and to compare it with the previous method. The comparison was based on several statistical criteria: variable selection accuracy, sensitivity to influential variables, specificity for non-influential variables, and the mean squared error (MSE) of the model. The method that achieves the highest accuracy, sensitivity, and specificity rates, along with the lowest MSE, is considered superior. The analysis of both real and simulated data showed that the proposed method outperforms the previous one in terms of robustness and efficiency.
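The comparison criteria named in the abstract can be illustrated with a minimal Python sketch. It assumes the usual simulation setup in which the true coefficient vector is known, treats a variable as "selected" when its estimated coefficient is non-zero, and computes MSE on fitted responses. The function names, the tolerance `tol`, and the exact MSE definition are assumptions made for illustration and are not taken from the paper.

```python
from typing import Dict, Sequence


def selection_metrics(
    true_beta: Sequence[float],
    est_beta: Sequence[float],
    tol: float = 1e-8,  # assumed threshold for "non-zero"
) -> Dict[str, float]:
    """Accuracy, sensitivity and specificity of a variable selection.

    A variable counts as influential if its true coefficient is non-zero,
    and as selected if its estimated coefficient is non-zero.
    """
    if len(true_beta) != len(est_beta):
        raise ValueError("coefficient vectors must have the same length")

    truth = [abs(b) > tol for b in true_beta]
    picked = [abs(b) > tol for b in est_beta]

    tp = sum(t and p for t, p in zip(truth, picked))          # influential, selected
    tn = sum(not t and not p for t, p in zip(truth, picked))  # non-influential, dropped
    fp = sum(not t and p for t, p in zip(truth, picked))      # non-influential, selected
    fn = sum(t and not p for t, p in zip(truth, picked))      # influential, dropped

    nan = float("nan")
    return {
        "accuracy": (tp + tn) / len(truth) if truth else nan,
        "sensitivity": tp / (tp + fn) if (tp + fn) else nan,
        "specificity": tn / (tn + fp) if (tn + fp) else nan,
    }


def mean_squared_error(y: Sequence[float], y_hat: Sequence[float]) -> float:
    """Average squared difference between observed and fitted responses."""
    if len(y) != len(y_hat) or not y:
        raise ValueError("y and y_hat must be non-empty and equally long")
    return sum((a - b) ** 2 for a, b in zip(y, y_hat)) / len(y)


if __name__ == "__main__":
    # Hypothetical example: two influential variables out of five.
    true_beta = [2.0, -1.5, 0.0, 0.0, 0.0]
    est_beta = [1.8, 0.0, 0.0, 0.3, 0.0]
    print(selection_metrics(true_beta, est_beta))
    print(mean_squared_error([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
```

Under these assumptions, a method that recovers more of the truly influential variables raises sensitivity, while one that wrongly keeps noise variables lowers specificity, which is why the abstract reports the two rates alongside overall accuracy.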

References

Alfons, A., Croux, C., & Gelper, S. (2013). Sparse Least Trimmed Squares Regression for Analyzing High-Dimensional Large Data Sets. The Annals of Applied Statistics, 7(1), 226–248. doi:10.1214/12-AOAS575.

Arslan, O. (2012). Weighted LAD-LASSO Method for Robust Parameter Estimation and Variable Selection in Regression. Computational Statistics & Data Analysis, 56(6), 1952–1965.

Belsley, D. A., Kuh, E., & Welsch, R. E. (1980). Regression Diagnostics: Identifying Influential Data and Sources of Collinearity. John Wiley & Sons.

Croux, C. & Haesbroeck, G. (1999). Influence Function and Efficiency of the Minimum Covariance Determinant Scatter Matrix Estimator. Journal of Multivariate Analysis, 71(2), 161–190.

Harrison, D. & Rubinfeld, D. L. (1978). Hedonic Housing Prices and the Demand for Clean Air. Journal of Environmental Economics and Management, 5(1), 81–102.

Kesseku, R. (2021). Robust Variable Selection in Multiple Linear Regression Via Penalized Least Trimmed Squares (Master's thesis, The University of Texas at El Paso).

Kurnaz, F. S., Hoffmann, I., & Filzmoser, P. (2018). Robust and sparse estimation methods for high dimensional linear and logistic regression. Chemometrics and Intelligent Laboratory Systems, 172, 211–222.

Montgomery, D. C., Peck, E. A., & Vining, G. G. (2012). Introduction to Linear Regression Analysis (5th ed.). John Wiley & Sons.

Rousseeuw, P. J. (1984). Least trimmed squares estimator. Technometrics, 26(3), 393–403.

Yohai, V. J. (1987). High breakdown-point and high efficiency robust estimates for regression. The Annals of Statistics, 15(2), 642–656.

License

Copyright (c) 2026 Hassan S. Uraibi, Hassan Ali Abis

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.