Constructing Regression Model Using Penalty Methods to Process High-Dimensional Data for a Factorial Experiment

DOI:

https://doi.org/10.29304/jqcsm.2026.18.12480

Keywords:

Bridge, LASSO, ALASSO, SCAD, a factorial experiment

Abstract

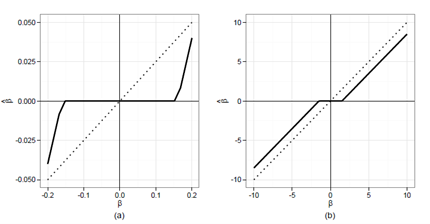

This research addresses high-dimensional data in a 2³ factorial experiment model under a completely randomized design. The columns of the matrix X represent the overall arithmetic mean, the effects of the factor levels, and their interactions, while the rows represent the experimental observations. The high-dimensional data problem is handled with several methods, which are compared according to various criteria. Penalty methods (Bridge, LASSO, ALASSO, SCAD) were employed to select the significant factors in the model. Their performance was compared through simulation and the application of statistical criteria. The results show that LASSO and Adaptive LASSO (ALASSO) perform poorly in most cases, with high MSE and MAD values. SCAD generally falls in between, performing better than LASSO and ALASSO but not as well as Bridge.
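As a rough illustration of the setup described in the abstract, the following Python sketch builds the model matrix of a 2³ factorial experiment and writes out the four penalty terms in their standard textbook forms. It is not the authors' code. The function names, the coded -1/+1 factor levels, the default bridge exponent of 0.5, and the definitions of MSE and MAD as averages over coefficient errors are all assumptions made for illustration; the abstract does not specify them. The SCAD default a = 3.7 is the value suggested by Fan and Li (2001).

```python
from itertools import combinations, product
from math import prod


def factorial_design(k=3):
    """Model matrix of a 2^k factorial in coded -1/+1 units (assumed coding).

    Columns: overall mean (I), main effects, then all interactions.
    Rows: one per treatment combination (no replication in this sketch).
    """
    factors = "ABCDEFGH"[:k]
    # Every subset of factors defines one column; the empty subset is the mean.
    terms = [c for r in range(k + 1) for c in combinations(range(k), r)]
    labels = ["I" if not t else "".join(factors[i] for i in t) for t in terms]
    runs = list(product((-1, 1), repeat=k))
    # An interaction column is the elementwise product of its factor columns.
    X = [[prod(run[i] for i in t) for t in terms] for run in runs]
    return labels, X


def lasso_penalty(beta, lam):
    """LASSO: lam * sum |b_j|."""
    return lam * sum(abs(b) for b in beta)


def bridge_penalty(beta, lam, gamma=0.5):
    """Bridge: lam * sum |b_j|^gamma with gamma > 0 (gamma = 1 gives LASSO)."""
    return lam * sum(abs(b) ** gamma for b in beta)


def adaptive_lasso_penalty(beta, lam, weights):
    """Adaptive LASSO: lam * sum w_j |b_j|, with data-driven weights w_j."""
    return lam * sum(w * abs(b) for w, b in zip(weights, beta))


def scad_penalty(beta, lam, a=3.7):
    """SCAD penalty of Fan and Li (2001), summed over coefficients."""
    total = 0.0
    for b in beta:
        t = abs(b)
        if t <= lam:
            total += lam * t
        elif t <= a * lam:
            total += (2 * a * lam * t - t * t - lam * lam) / (2 * (a - 1))
        else:
            total += lam * lam * (a + 1) / 2
    return total


def mse(estimate, truth):
    """Mean squared error between two coefficient vectors (assumed definition)."""
    return sum((e - t) ** 2 for e, t in zip(estimate, truth)) / len(truth)


def mad(estimate, truth):
    """Mean absolute deviation between two coefficient vectors (assumed definition)."""
    return sum(abs(e - t) for e, t in zip(estimate, truth)) / len(truth)


if __name__ == "__main__":
    labels, X = factorial_design(3)
    print(labels)
    for row in X:
        print(row)
```

Each penalty would be added to the residual sum of squares to form the objective that the corresponding estimator minimizes; the sketch stops short of the optimization itself, which the abstract does not describe.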

License

Copyright (c) 2026 Mahmood M Taher

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.