An LSTM-based approach for predicting the next word in Arabic language

DOI:

https://doi.org/10.29304/jqcsm.2026.18.12502

Keywords:

Next word prediction, Arabic language, Deep learning, Recurrent neural network, LSTM, Language modeling, Next word suggestion technique, Natural language processing, Corpus

Abstract

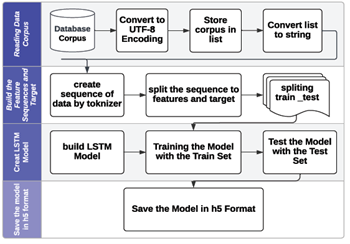

Next word prediction, also called language modeling, is a crucial task in natural language processing (NLP) that speeds up typing by suggesting the next word, saving time during conversations. The task is difficult because the model must anticipate what the user intends to write. A recurrent neural network (RNN), specifically Long Short-Term Memory (LSTM), models the preceding text and predicts the words that follow, helping the user construct sentences. This study applies an LSTM to predict the next word in Arabic, one of the most complex languages and one with limited resources. The model achieved an accuracy of 97.49% and a loss of 0.5155%, suggesting that it is effective for predicting the next word in Arabic.
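As an illustration of how next word prediction is commonly framed as a supervised task, the sketch below pairs each window of preceding words with the word that follows it; a sequence model such as an LSTM would then be trained to map each context to its target. The abstract does not describe the study's preprocessing, so the function name `make_training_pairs`, the `context_size` parameter, the whitespace tokenization, and the sample sentence are all assumptions made for this example.

```python
from typing import List, Tuple


def make_training_pairs(
    tokens: List[str], context_size: int = 3
) -> List[Tuple[List[str], str]]:
    """Pair each window of up to `context_size` preceding words with the next word.

    Hypothetical helper for illustration; not taken from the paper.
    """
    pairs = []
    for i in range(1, len(tokens)):
        start = max(0, i - context_size)
        pairs.append((tokens[start:i], tokens[i]))
    return pairs


# Assumption: plain whitespace tokenization. Real Arabic text would usually
# need normalization (diacritics, letter variants) before this step.
sentence = "ذهب الولد إلى المدرسة صباحا"
tokens = sentence.split()
pairs = make_training_pairs(tokens, context_size=3)

for context, target in pairs:
    print(" ".join(context), "->", target)
```

In a full pipeline, each word would typically be mapped to an integer index and the contexts padded to a fixed length before being fed to the network; those steps are omitted here.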

License

Copyright (c) 2026 Heba Adnan Raheem

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.